Recently, I realised that there were currently five sites undergoing excavation, all in Sussex, that had targeted geophysics I had done. With my ego approaching dangerously unsafe proportions, I resolved to go on a quest: to visit all five sites in one day, and make a blog post about it. All of these sites have volunteer positions, so if you fancy a dig next year, you can join up and join in.

Site 1: Priory Park, Chichester

After the GPR survey in 2015, and a successful first season of excavation in 2017, CDAS returned for a second season of excavation looking at the east side of the bath house. The excavation trench was much bigger this time, and they are looking for the connection between the bath house and the Roman town house to the south. There are what seem to be robbed out walls connecting the two, which need to be confirmed on the ground. Judging by the GPS survey, the new trench covers part of last year's excavation, and the entire bath house.

Priory Park excavations, 2017 and 2018 trenches

When I visited, they were only halfway through the excavation, so the layout of the building wasn't completely clear yet. The picture below is of the re-cleared area from last year, showing pilae, with some floor still surviving, sticking out of the baulk. I gather from later reports that the building was not completely excavated by the end of the second week, but they had certainly made progress. Some of the pilae seemed to extend beyond the south wall, which was very odd, as no additional rooms appeared on the GPR. Perhaps the building was bigger than the tiny footprint I had found.

Priory Park bath house

Site 2: Rocky Clump, Stanmer

This is a Romano-British farmstead site on the Downs north of Brighton that has been under excavation for many years by BHAS. Historically, the excavation started in the clump itself, then in more recent times moved north of the clump, and then, after some geophysics, excavations have moved to an enclosed settlement to the south-east of the clump. The currently open trench, which is tacked onto the east edge of the last trench, covers several ditches within the settlement, in an attempt to find evidence of occupation.

Rocky Clump excavations and ditches found so far

As you can see, a number of ditches have already been found. The feature in the corner of the inner enclosure turned out to be a solution hollow. Some of the ditches have a high flint content, arranged in a way that suggests the flint was placed deliberately. Could it be to support something above? If so, why the need for a ditch in the first place? As ever, the Rocky Clump excavations are providing as many questions as answers.

North ditch under excavation

Flint layer on top of inner south ditch

I also resolved to investigate further the area between the current excavation and the clump. There are a number of large features on the earth resistance and radar where it is not quite clear whether they are archaeological or geological. I tried radar as a further complement to the earlier surveys, which managed to answer the question. The geological features are all clay-with-flints, and there is enough moisture left in the ground, despite the hot weather, to render the clay impenetrable to radar. These are easy to spot in the results. There is a strong signal up top, then a blank area below, as the signal is attenuated by the clay. This contrasts nicely with the surrounding messiness of the chalk, which is visible to a much greater depth.
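For anyone who wants to see that "strong up top, blank below" signature as a number, here is a minimal sketch. It is not how the survey data was actually processed. The function name `deep_to_shallow_ratio`, the split point and both traces are invented for illustration, and real radar traces would need gain and background corrections to be accounted for before a comparison like this means anything.

```python
def deep_to_shallow_ratio(trace, split_index):
    """Ratio of mean absolute amplitude below split_index to that above it.

    A value near zero means the lower part of the trace is almost blank,
    which is the pattern described for clay-with-flints.
    """
    shallow = trace[:split_index]
    deep = trace[split_index:]
    if not shallow or not deep:
        raise ValueError("split_index must leave samples on both sides")
    shallow_mean = sum(abs(s) for s in shallow) / len(shallow)
    deep_mean = sum(abs(s) for s in deep) / len(deep)
    if shallow_mean == 0:
        raise ValueError("shallow window has no signal")
    return deep_mean / shallow_mean


# Invented traces in arbitrary amplitude units, eight samples each.
clay_trace = [9, -8, 7, -6, 0, 0, 0, 0]      # strong top, blank below
chalk_trace = [6, -5, 4, -4, 3, -2, 2, -1]   # messy, but visible at depth

clay_ratio = deep_to_shallow_ratio(clay_trace, 4)
chalk_ratio = deep_to_shallow_ratio(chalk_trace, 4)
print(clay_ratio, chalk_ratio)
```

On these made-up numbers the clay trace gives a ratio of zero, while the chalk trace keeps a fair share of its signal at depth.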

Clay with flints in a solution hollow surrounded by chalk

One of the features did turn out to be much more interesting. Situated right next to Rocky Clump itself was a circular feature. It is barely visible as an amplitude change, but is very clear as a phase change. It has the shape of a shallow, round bowl, and my best interpretation is a dew pond. There are plenty of animal bones at Rocky Clump, so it makes sense that there would be a water source for the animals, if it is Roman in date.

Vertical section across the centre of the possible dew pond

Possible dew pond, top left

Site 3: Plumpton Roman Villa

Ok, for this site, I only set out the grids and processed the data. The survey itself was done by someone else. That still counts, right? The site has been under excavation by the

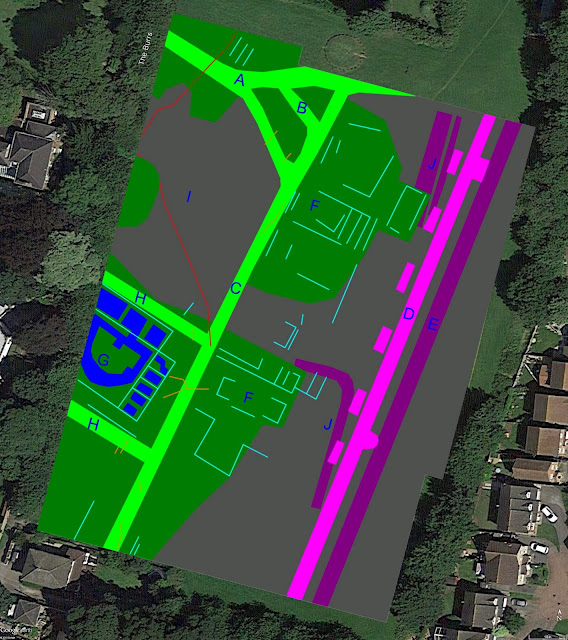

Sussex School of Archaeology for a few years now, starting at the east end of the villa building and working west. The western end, which is now under excavation, seems to be interpreted as a bath house. Below is the current excavation trench, overlayed on earth resistance of the site. Some of the southerly walls do not show up well on the geophys, as there is a higher content of chalk there compared to the use of flint in the north. I have not recorded the more substantial western end in the plan below, as that is still to be fully revealed by excavation.

Plumpton excavation trench and exposed walls, 2018

View from the south, looking at the chalk wall foundations

More substantial foundations on the north side

Site 4: Barcombe Roman roadside settlement

I started surveying this massive site

back in 2011, and it is currently under excavation by the

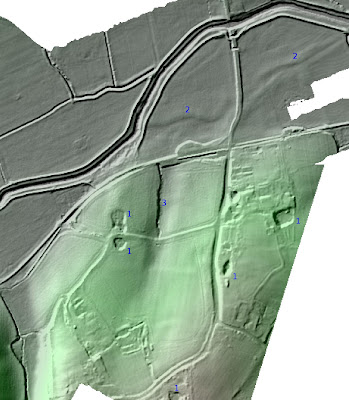

Culver Archaeological Project. This year, they moved to the centre of the defended settlement, in the hopes of finding the footprints of buildings. What postholes they have found so far don't quite resolve themselves into buildings yet. Instead, there seems to be a lot of kiln features, though whether these are industrial features, or something domestic, like corn drying, is not quite clear yet. You can see the current excavation area highlighted in the images below. If we change the display bounds to a higher value, it makes it easier to see which features are more likely to be industrial in nature. The black demolition layer visible in other trenches is also present here, so it must have been quite an event. What's left of the road surface is very close to the surface, so no surprise it is being plouged away. The trench is a rather large 20m x 45m, so they certainly have a large area to investigate. They will be back here next year.
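For anyone curious what changing the display bounds does to a magnetometry plot, here is a minimal sketch, assuming the plot simply clips every reading to plus or minus the boundary and stretches what is left across the greyscale. The function name `clip_to_display_bounds` and the readings are made up for illustration, and this is not necessarily how any particular geophysics package does it.

```python
def clip_to_display_bounds(readings_nt, bound_nt):
    """Clip readings to [-bound_nt, +bound_nt] and rescale to 0..1 greyscale.

    0.0 is one end of the greyscale, 1.0 the other, 0.5 is a zero reading.
    """
    if bound_nt <= 0:
        raise ValueError("bound_nt must be positive")
    scaled = []
    for value in readings_nt:
        clipped = max(-bound_nt, min(bound_nt, value))
        scaled.append((clipped + bound_nt) / (2 * bound_nt))
    return scaled


# Invented readings in nT: background, a weak ditch-like anomaly,
# a slight negative, and two much stronger anomalies.
readings = [0.0, 2.5, -1.0, 15.0, 60.0]

narrow = clip_to_display_bounds(readings, 3)    # 3 nT display boundary
wide = clip_to_display_bounds(readings, 20)     # 20 nT display boundary
print(narrow)
print(wide)
```

With the 3 nT boundary, the 15 nT and 60 nT readings both saturate and look identical. With the 20 nT boundary, only the strongest one still saturates, so the very strongly magnetic features stand apart from everything else, at the cost of the weak ditch-like anomaly fading towards the background.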

Magnetometry, 3nT display boundary

Magnetometry, 20nT display boundary

One of the kilns. Site supervisor for scale

Site 5: Jevington Church

Jevington are extending their churchyard, and I was asked by ENHAS to survey the area ahead of an investigation. The magnetometer was pretty useless in such a small fenced field, but the GPR came up with some results, despite problems with the encoder wheel, thankfully now fixed. At the northern end of the field there seemed to be an old track, leading to the church, surrounded by some solid features. I originally thought these might be grave furniture, but one of them, under excavation, turned out to be the rather boring concrete footing for an old fence post. The track itself was composed of gravel. Further to the south, the tenuously identified plague pit turned out to be a scatter of Neolithic struck flint, which was much more interesting than a fence post.

Some of the features found at Jevington and the trenches opened to investigate them

Concrete post footing

Neolithic flint scatter. Photo courtesy of Martin Jeffery